Introduction

Automatic Speech Recognition (ASR) has made significant strides over the last decade, but most ASR models on the market offer general-purpose transcription. They perform well in clean, controlled environments but break down when handling:

- Technical jargon & acronyms – Standard ASR models often fail to recognize niche terminology used in many industries (e.g., medical or manufacturing terms).

- Noisy industrial settings – Background noise, overlapping speech, and other real-world conditions degrade transcription quality.

- Lack of real-time adaptability – Most ASR models require extensive retraining to work effectively in new domains.

Jargonic, aiOla’s new foundation model for ASR, addresses these issues through advanced domain adaptation, real-time contextual keyword spotting, and zero-shot learning. This lets it handle industry-specific language out of the box and makes it suitable for real-world enterprise deployment.

How Jargonic Works

Jargonic leverages a state-of-the-art ASR architecture, designed for enterprise-scale applications, ensuring superior robustness and precision, especially with specialized industry vocabulary.

Instead of relying on extensive fine-tuning, Jargonic employs a context-aware adaptive learning mechanism that allows it to recognize domain-specific terminology without retraining. The jargon terms are detected by a proprietary keyword spotting (KWS) mechanism that is deeply integrated into the ASR architecture. Unlike standard ASR models that require manually curated vocabulary lists, Jargonic learns and auto-adapts to industry-specific terminology through its inference pipeline. That is, keywords do not need to be provided as audio examples, and no further training or fine-tuning is needed to introduce new keywords (e.g., jargon terms) to the system.

Combining Keyword Spotting with ASR

Jargonic’s approach integrates a proprietary KWS mechanism with advanced speech recognition in a two-stage architecture. First, the proprietary KWS system identifies the presence of domain-specific terms within the audio stream. Then, this contextual information is fed into the core ASR engine through an adaptive layer, effectively steering the model’s generation towards the relevant domain context.

This architecture allows Jargonic to achieve superior accuracy for general speech while also handling specialized vocabulary recognition. The KWS system is zero-shot and can be instantly reconfigured for different industry vocabularies by simply providing a new list of keywords, enabling flexibility across any domain with heavily jargonized speech. Through this approach, Jargonic improves overall accuracy for audio samples containing industry-specific terms while eliminating the resource-intensive retraining typically required for domain adaptation.
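The two-stage flow described above can be illustrated with a toy sketch. This is not Jargonic's implementation: the real KWS operates on audio and the adaptive layer sits inside the model, neither of which is public. Here, stage 1 is replaced by thresholding hypothetical per-keyword detection scores, and stage 2 is replaced by a plain n-best rescoring step that rewards candidates containing a detected keyword. All names (`Hypothesis`, `spot_keywords`, `pick_transcript`) and all values are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass
class Hypothesis:
    text: str
    score: float  # log-score from the base recognizer; higher is better


def spot_keywords(kws_scores: Dict[str, float], keywords: List[str],
                  threshold: float = 0.5) -> Set[str]:
    """Stage 1 stand-in: keep the configured keywords whose (hypothetical)
    detection score meets the threshold."""
    return {k for k in keywords if kws_scores.get(k, 0.0) >= threshold}


def pick_transcript(hypotheses: List[Hypothesis], detected: Set[str],
                    bonus: float = 2.0) -> Hypothesis:
    """Stage 2 stand-in: add a bonus for each detected keyword a candidate contains."""
    def biased_score(h: Hypothesis) -> float:
        padded = f" {h.text.lower()} "  # pad so only whole words or phrases match
        hits = sum(1 for k in detected if f" {k.lower()} " in padded)
        return h.score + bonus * hits
    return max(hypotheses, key=biased_score)


# Hypothetical inputs: n-best candidates and per-keyword detection scores.
n_best = [Hypothesis("reset the please see", -4.0),
          Hypothesis("reset the plc", -5.0)]
kws_scores = {"plc": 0.91, "torque wrench": 0.12}

# Manufacturing vocabulary: "plc" is detected, so the second candidate wins.
manufacturing = spot_keywords(kws_scores, ["plc", "torque wrench"])
print(pick_transcript(n_best, manufacturing).text)

# Swapping in a different keyword list needs no retraining; with no keyword
# detected, the base recognizer's top candidate is kept.
medical = spot_keywords(kws_scores, ["stent", "tachycardia"])
print(pick_transcript(n_best, medical).text)
```

The point of the sketch is the interface: the keyword list is an inference-time input, so changing domains means changing a list rather than retraining a model.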

Innovative Noise Robustness for Multilingual Speech Recognition

Jargonic was trained using a proprietary noise-handling approach that works independently of language. Unlike conventional approaches, our specialized data enrichment process uses various types of industrial noise under different conditions – both with and without speech present. Because the method is language-independent, the model maintains consistent performance regardless of which language is being processed. Traditional noise robustness techniques often add basic white noise or reverberation patterns optimized primarily for English, so their gains may not generalize to other languages such as Japanese or German. Our approach avoids this limitation by using real-world noise profiles from industrial settings and a pipeline that generalizes effectively across our entire language suite, ensuring reliable transcription even in challenging acoustic environments such as manufacturing floors.
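The exact enrichment pipeline is proprietary, but the general idea of mixing noise into speech at a controlled signal-to-noise ratio (SNR), and of including speech-free clips, can be sketched as follows. This is a minimal illustration under stated assumptions: the function names are hypothetical, the synthetic tone and Gaussian noise are stand-ins for real speech and recorded industrial noise, and labeling noise-only clips with an empty transcript is our assumption about how "without speech present" might be used.

```python
import math
import random
from typing import List, Tuple


def rms(samples: List[float]) -> float:
    """Root-mean-square amplitude of a non-empty signal."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def mix_at_snr(speech: List[float], noise: List[float], snr_db: float) -> List[float]:
    """Add noise to speech, scaled so the speech-to-noise ratio equals snr_db."""
    # Loop the noise clip so it covers the whole utterance.
    tiled = [noise[i % len(noise)] for i in range(len(speech))]
    noise_rms = rms(tiled)
    if noise_rms == 0.0:
        return list(speech)
    # SNR_dB = 20 * log10(rms_speech / rms_noise), solved for the noise gain.
    gain = rms(speech) / (noise_rms * 10 ** (snr_db / 20))
    return [s + gain * n for s, n in zip(speech, tiled)]


def noise_only_example(noise: List[float]) -> Tuple[List[float], str]:
    """Pair a speech-free clip with an empty transcript (an assumed labeling)."""
    return list(noise), ""


rng = random.Random(0)
sample_rate = 16_000
# Stand-ins for real recordings: a 220 Hz tone as "speech", Gaussian noise as "machinery".
speech = [0.5 * math.sin(2 * math.pi * 220 * t / sample_rate) for t in range(sample_rate)]
noise = [rng.gauss(0.0, 1.0) for _ in range(4_000)]

# One clean utterance yields several noisy training variants.
augmented = {snr: mix_at_snr(speech, noise, snr) for snr in (20, 10, 0)}
silent_clip, silent_label = noise_only_example(noise)
```

Nothing in the mixing step depends on the language being spoken, which is consistent with the language-independence described above.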

Performance Benchmarks

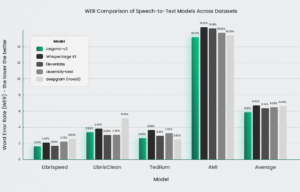

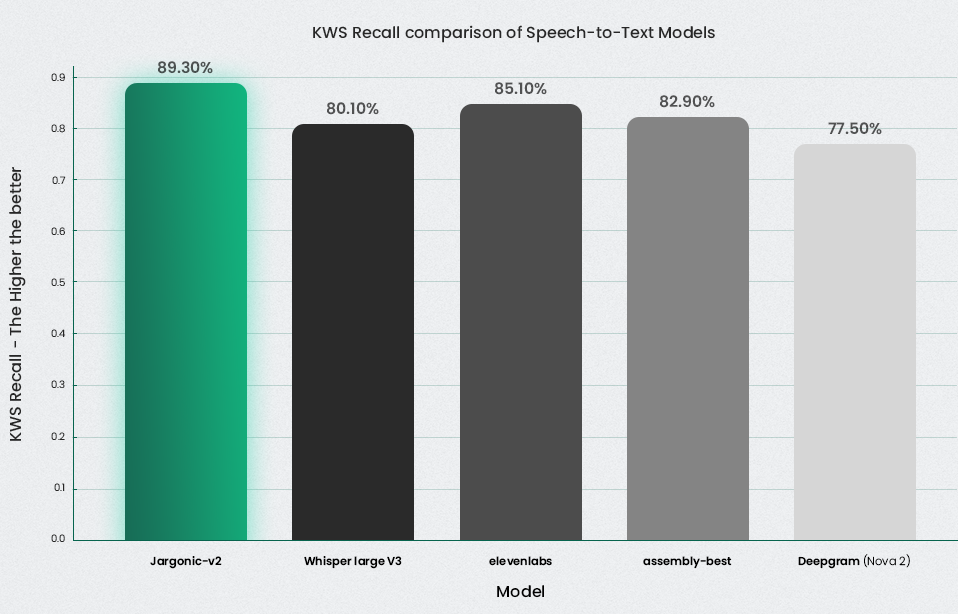

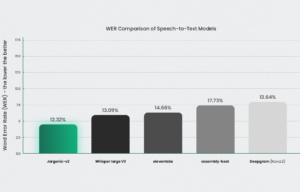

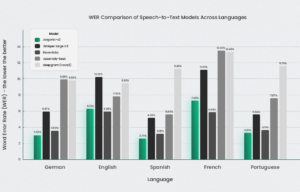

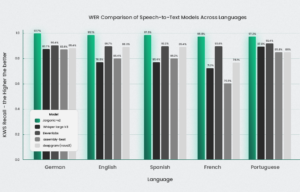

The graphs compare the performance of Jargonic V2 against OpenAI Whisper (v3), Deepgram, AssemblyAI, and ElevenLabs across multiple languages. They report two metrics: Word Error Rate (WER), where lower values indicate better performance, and recall on jargon terms, where higher values are preferred. Overall, Jargonic V2 achieves superior WER across most languages and datasets, and it consistently outperforms all other models in keyword detection and transcription.
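For readers less familiar with the two metrics, the following sketch shows one common way to compute them. WER is the word-level edit distance (substitutions, deletions, insertions) divided by the number of reference words. The jargon recall definition here is an assumption, since the exact scoring used for the benchmarks is not described: it counts how many jargon-term occurrences in the reference also appear in the hypothesis. The example sentences are invented.

```python
from typing import List, Sequence


def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref, hyp = reference.lower().split(), hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    prev = list(range(len(hyp) + 1))  # distances for an empty reference prefix
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            curr.append(min(prev[j] + 1,      # deletion
                            curr[j - 1] + 1,  # insertion
                            substitution))    # substitution or match
        prev = curr
    return prev[-1] / len(ref)


def count_phrase(tokens: List[str], phrase: List[str]) -> int:
    """Number of positions where phrase occurs as a contiguous run in tokens."""
    n = len(phrase)
    return sum(1 for i in range(len(tokens) - n + 1) if tokens[i:i + n] == phrase)


def jargon_recall(reference: str, hypothesis: str, jargon_terms: Sequence[str]) -> float:
    """Share of jargon-term occurrences in the reference found in the hypothesis."""
    ref, hyp = reference.lower().split(), hypothesis.lower().split()
    expected = recovered = 0
    for term in jargon_terms:
        phrase = term.lower().split()
        if not phrase:
            continue
        in_ref = count_phrase(ref, phrase)
        expected += in_ref
        recovered += min(in_ref, count_phrase(hyp, phrase))
    if expected == 0:
        raise ValueError("no jargon terms occur in the reference")
    return recovered / expected


reference = "check the plc before restarting the hmi panel"
hypothesis = "check the plc before restarting the hm i panel"
print(word_error_rate(reference, hypothesis))               # 0.25
print(jargon_recall(reference, hypothesis, ["plc", "hmi"]))  # 0.5
```

In this example a single mangled acronym costs two word errors out of eight, yet halves the jargon recall, which is why the two metrics are reported separately.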

Jargonic V2 achieves strong results even without the keyword spotting mechanism. Figure 3 presents the results across various English datasets. In most test cases, Jargonic V2 outperforms competitors, and it maintains the highest average performance across all benchmarks.